# Free proxy website list

## Why should free proxies be avoided?

Despite the apparent availability of free proxies, they have a number of drawbacks that prevent them from being used in production. Because free proxy lists cost nothing, the quality of each proxy is not guaranteed. Furthermore, a simple open-source proxy scraper allows you to create your own proxy collector in a short time (even using a different programming language).


## proxy-scraper: a starting point to build your own proxy scraper

Proxy-scraper is a simple CLI tool built using Python. It does not have many features or integrations, but it is a great open-source project to start building your own solution. Proxy-scraper contains only two code files, proxyScraper.py and proxyChecker.py, which each perform their own job and interact through the output file (by default it is output.txt). Despite its simplicity and lack of extra functionality, this project demonstrates well the main aspects of the proxy scraper group.

Check out more information in the official documentation.
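The file-based hand-off between a scraper script and a checker script can be sketched roughly as follows. This is not proxy-scraper's actual code: the function names `save_proxies` and `load_proxies` are hypothetical, and the file format (one `ip:port` entry per line) is an assumption made for illustration.

```python
import re
from pathlib import Path

# Assumed file format: one "ip:port" entry per line.
PROXY_LINE = re.compile(r"^\d{1,3}(?:\.\d{1,3}){3}:\d{1,5}$")


def save_proxies(path, proxies):
    """Scraper stage: write unique, well-formed entries, one per line."""
    unique = sorted({p.strip() for p in proxies if PROXY_LINE.match(p.strip())})
    text = "\n".join(unique) + ("\n" if unique else "")
    Path(path).write_text(text, encoding="utf-8")
    return len(unique)


def load_proxies(path):
    """Checker stage: read entries back, skipping blank or malformed lines."""
    lines = Path(path).read_text(encoding="utf-8").splitlines()
    return [line.strip() for line in lines if PROXY_LINE.match(line.strip())]
```

Keeping the two stages decoupled through a plain text file means either one could be rewritten, even in another language, as long as it reads or writes the same format.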

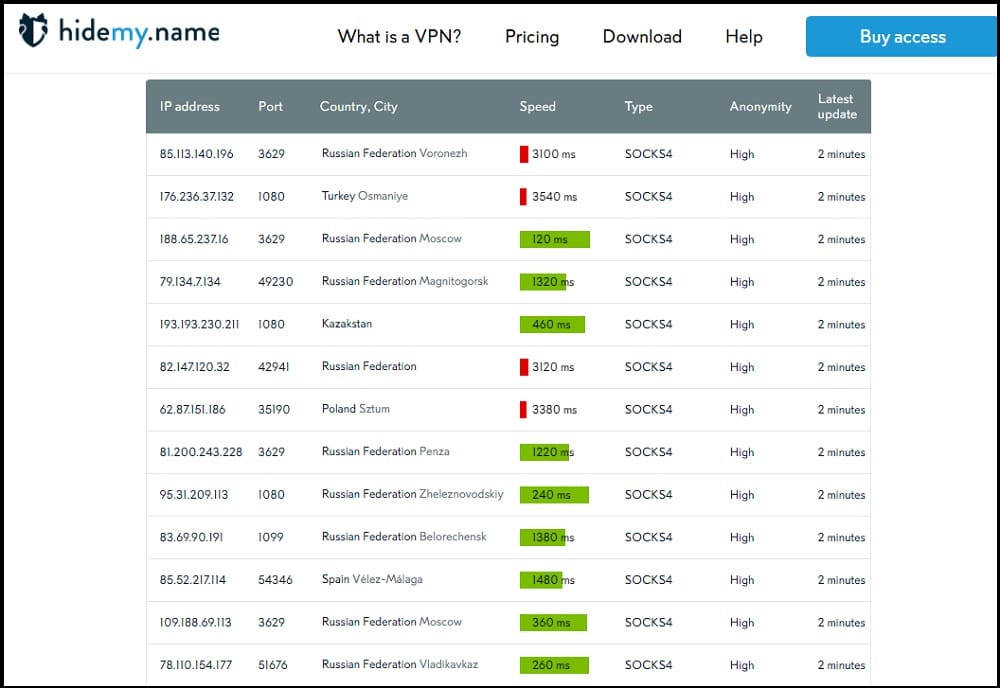

## Why do we need a proxy server for web scraping?

As you may know, web scraping is the process of extracting data from web pages. This means that the data on those pages has some value, which website administrators want to protect from automated collection. There are plenty of possible data protection mechanisms, such as fingerprinting, CAPTCHAs, and JavaScript challenges. Still, the basic ones are IP restriction and IP rate limiting, which automatically or manually block access from particular IP addresses. A proxy server allows web scrapers to avoid such protection by changing the requester's IP address and preventing further restriction.

## Proxy scraping tools

Every good proxy scraping tool should perform at least two functions: constantly retrieve proxy servers and check them. The first function is usually implemented by crawling through a pre-defined list of free proxy websites and collecting IP/port information from them. The second part is a checker: a function that iterates through the whole database of harvested proxies and tries to perform requests using each of them.

More extended proxy scrapers may collect meta-information about proxies, such as country, average latency, and anonymity, and provide proxy rotation along with HTTP proxy forwarding, so you don't need to manage a connection for each of the collected IP addresses. Let's check out the most popular and promising open-source tools from this category.

Scylla is one of my favourites across all the projects I've seen. It combines a proxy crawler, a checker, and an HTTP forward proxy all-in-one. Also, Scylla is under active development, so the project contributors will constantly deliver new features and fix bugs. Its features include:

- Automatic proxy IP crawling and validation.
- Scrapy and requests integration with only 1 line of code minimally.

Scylla installation is also as easy as a single line of code.

ProxyBroker is configured from the command line with options such as `--port 8888 --types HTTP HTTPS --lvl High --countries US`. ProxyBroker can also be integrated into your Python code, so it is easy to extend it with your own functionality.
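The two functions a proxy scraping tool should perform, retrieving and checking, can be sketched with the standard library alone. This is a minimal illustration, not the code of any tool mentioned here: the names `extract_proxies`, `probe`, and `check_proxies` are hypothetical, the regex assumes proxies appear as plain `ip:port` text, and `http://example.com/` is only a placeholder test URL.

```python
import http.client
import re
import urllib.request

# Assumption: proxies appear in the page text as "ip:port".
PROXY_RE = re.compile(r"\b(\d{1,3}(?:\.\d{1,3}){3}):(\d{2,5})\b")


def extract_proxies(text):
    """Retrieve step: unique "ip:port" strings, in order of appearance."""
    found = []
    for ip, port in PROXY_RE.findall(text):
        valid_ip = all(int(octet) <= 255 for octet in ip.split("."))
        if valid_ip and int(port) <= 65535:
            entry = f"{ip}:{port}"
            if entry not in found:
                found.append(entry)
    return found


def probe(proxy, test_url="http://example.com/", timeout=5.0):
    """Try one request through the proxy; True only on an HTTP 200 response."""
    handler = urllib.request.ProxyHandler({"http": f"http://{proxy}"})
    opener = urllib.request.build_opener(handler)
    try:
        with opener.open(test_url, timeout=timeout) as response:
            return response.status == 200
    except (OSError, http.client.HTTPException):
        # URLError, timeouts, and socket errors are OSError subclasses.
        return False


def check_proxies(proxies, probe_fn=probe):
    """Check step: keep only the proxies for which the probe succeeds."""
    return [proxy for proxy in proxies if probe_fn(proxy)]
```

Passing the probe in as a parameter keeps the checker testable without network access, for example `check_proxies(candidates, probe_fn=lambda p: True)`. Many free proxy sites put the IP and port in separate table cells, in which case an HTML parser would be needed instead of the regex.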

Using a quality proxy server is the key to a successful web scraper. A variety of IPs, along with their quality, makes it possible to collect data from various websites without worrying about being blocked.

Still, many websites provide free proxy lists, so can the process of getting IP addresses from them be automated? Are free proxies good enough for web scraping? Let's check it out.